How to Raise $60 Million: The Secret Weapon Obama’s Team Used in the 2008 Election

Sometimes, little things add up to be big things.

As a nonprofit, you probably know this principle well. Thousands of small contributions add up to the big dollars that allow your organization to do the work it does.

When it comes to your website, that same thing is true. Details—the little things—can make a huge impact on the metrics that matter most. Like how many people donate through your site, sign up for your events, or subscribe to receive emails from you.

During the 2008 presidential election, Barack Obama’s digital team proved the immense value of tiny details by showing how their testing efforts led to a 40% lift in email sign-ups. These additional sign-ups eventually generated an estimated $60 million in additional donations for the campaign. That’s big money.

They generated $60 million in additional funding by changing some words and pictures on a landing page. It’s pretty amazing.

Little things like colors, words, and design can make all the difference. And your organization can adopt the same testing principles and strategies that the Obama team used to generate more email sign ups, event registrations, or online donations.

Designing a test

In order to maximize your effectiveness and improve the accuracy of testing, you should strive to adhere to a scientific method for experimentation. This process was designed to make things repeatable and as accurate as possible, while controlling for any outside influence that would otherwise alter the outcome of an experiment.

Start with a hypothesis

Let’s take it way back to high school science class.

Every effective experiment should start with a hypothesis. This is how you give structure, consistency, and clarity to your experiment. Your hypothesis is the single change that you want to try in order to attempt to improve a specific outcome. It should be in the form of an assertion.

So, you might hypothesize: Making the “Donate” button red will increase the number of people who click on it.

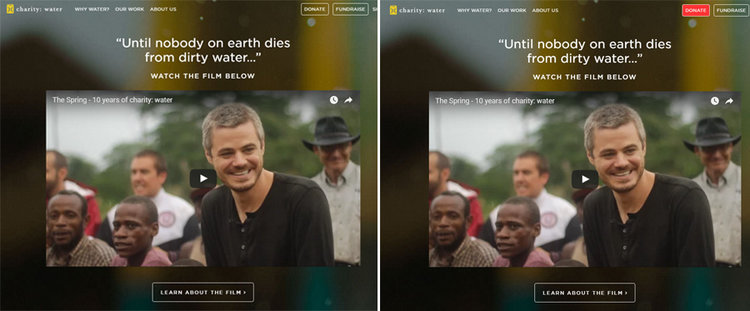

Charity: Water’s website with one change.

Set your control and test version

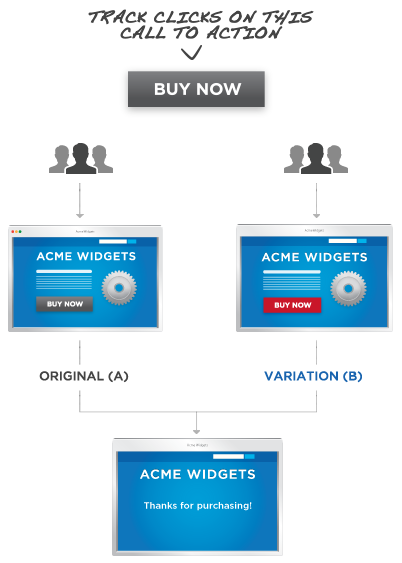

There are two basic types of tests you can run: A/B testing, which compares two versions at a time, and multivariate testing, which tests combinations of several changes simultaneously.

For the sake of simplicity and ease of execution, we are going to do an A/B test, meaning that we test the original version (control) against a single alternate version (variation). This is a pretty simple test and allows us to focus all of our time and attention on just two scenarios.

Source: Optimizely.com

It’s great for measuring things like the effect of a specific color, piece of copy, or placement choice. You can also use it to test two very different page designs against each other.

In the case of this experiment, your two scenarios are fairly obvious. One scenario—your control—is the original version of the page, with a transparent “Donate” button. Your “B”—or variation—is the version with the red button.
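If you are curious how visitors end up in one scenario or the other, here is a minimal sketch of a 50/50 split. It is only an illustration of the idea, not how any particular testing tool works; the function name `assign_variant`, the experiment label, and the visitor IDs are all made up for this example.

```python
import hashlib


def assign_variant(visitor_id: str, experiment: str = "donate-button-color") -> str:
    """Place a visitor in 'control' or 'variation' with a 50/50 split."""
    # Hashing the experiment name together with the visitor ID keeps each
    # visitor in the same group on every visit, so nobody sees both buttons.
    key = f"{experiment}:{visitor_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    bucket = int(digest, 16) % 100  # a number from 0 to 99
    return "control" if bucket < 50 else "variation"


# Hypothetical visitor IDs, for example values stored in a cookie.
for visitor in ["visitor-001", "visitor-002", "visitor-003"]:
    print(visitor, assign_variant(visitor))
```

The important property is consistency: the same visitor always lands in the same group, which keeps the comparison between control and variation clean.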

The control serves as a baseline. It’s what you’re starting with and hope to improve upon. So, say, in this experiment, we prove that the red button increases clicks by 5%. Then, we update the website to make the button red all the time. Now, if we decide to run another experiment (and you should) to test the effect of a green button, then we would test it against the red button as our new control.

Define your measurement

Ultimately, you are testing in order to improve or change some outcome—either quantitative or qualitative.

In the case of the button color, the outcome is immediate and obvious. We would need to track the percentage of people (remember to measure in percentages rather than raw counts) who click on the “Donate” button. That’s really it.
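To see why percentages matter more than raw counts, consider this small sketch. The visitor and click numbers are invented for illustration, and `click_rate` is just a helper name chosen for this example.

```python
def click_rate(clicks: int, visitors: int) -> float:
    """Return the share of visitors who clicked, as a percentage."""
    if visitors == 0:
        return 0.0
    return 100.0 * clicks / visitors


# Hypothetical numbers: the variation collected more raw clicks,
# but only because it happened to receive more traffic.
control = click_rate(clicks=120, visitors=2000)
variation = click_rate(clicks=130, visitors=2600)

print(f"control: {control:.1f}%  variation: {variation:.1f}%")
# prints "control: 6.0%  variation: 5.0%"
```

Judged by raw clicks, the variation looks like the winner (130 versus 120). Judged by click rate, the control is actually performing better.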

But some tests are not quite as straightforward.

For example, say you wanted to improve the engagement level on your website. That’s a pretty abstract measurement—and it requires you to define how you’ll measure it before you start the experiment. In this case, you might look at something like time on site or average pages per session for users who landed on that particular page. These are different measures of engagement and give you a way to quantify—even if by proxy—how engaged users are with your page.
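As a rough sketch of what those proxy measurements look like in practice, the snippet below averages pages per session and time on site for each version of a page. The session records and field names are hypothetical; in reality these numbers would come from your analytics tool.

```python
from statistics import mean

# Hypothetical session records for visitors who landed on the page under test.
sessions = [
    {"variant": "control", "pages": 2, "seconds": 45},
    {"variant": "control", "pages": 4, "seconds": 120},
    {"variant": "variation", "pages": 3, "seconds": 90},
    {"variant": "variation", "pages": 5, "seconds": 150},
]


def engagement(records, variant):
    """Average the two engagement proxies for one version of the page."""
    rows = [r for r in records if r["variant"] == variant]
    return {
        "avg_pages_per_session": mean(r["pages"] for r in rows),
        "avg_seconds_on_site": mean(r["seconds"] for r in rows),
    }


for name in ("control", "variation"):
    print(name, engagement(sessions, name))
```

Whichever proxy you pick, decide on it before the experiment starts so that you are not tempted to choose the number that looks best afterward.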

Executing your experiment

Okay, you’ve got a beautiful experiment designed and you’ve nailed down exactly the right measurement to give you a picture of the outcome.

Now what? How do you actually do the experiment?

Luckily, you don’t have to be a programmer to get a test up and running. I would recommend using a tool like Optimizely. It requires a single snippet of code to be added to your website, and then it has a click-and-edit interface for you to set up variations and test them accordingly. It also has a free plan that’s perfect for learning how to set up and run experiments.

Even better, it lets you set goals for specific experiments and track results—even calculating the statistical significance and letting you know when one variation is deemed a clear winner. It’s like A/B (or multivariate) testing in a box.
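If you want a feel for what “statistical significance” means here, the sketch below runs a textbook two-proportion z-test using only Python’s standard library. This is one common way to compare two click rates, not necessarily the method Optimizely or any other tool uses internally, and the traffic numbers are invented for illustration.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(clicks_a, visitors_a, clicks_b, visitors_b):
    """Return (z, two-sided p-value) for the difference in click rates."""
    rate_a = clicks_a / visitors_a
    rate_b = clicks_b / visitors_b
    # Pooled rate: the click rate if both versions performed identically.
    pooled = (clicks_a + clicks_b) / (visitors_a + visitors_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / visitors_a + 1 / visitors_b))
    z = (rate_b - rate_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical results: control 200 clicks from 5,000 visitors,
# variation 250 clicks from 5,000 visitors.
z, p = two_proportion_z_test(200, 5000, 250, 5000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A small p-value (a common threshold is below 0.05) suggests the difference between the two versions is unlikely to be random noise. With very little traffic, even a large-looking difference may not clear that bar, which is why tests often need to run for a while.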

What you should test on your website

There are a huge number of variables to test on any given page or website. In fact, it can seem daunting to think of all the possible things that could be changed on your site.

And that is exactly why testing and optimization is not a one-time thing. It’s a process of continuous improvement, and it should be treated as such. You don’t need to test and compare every possible variation in one week.

The nice thing is that you can narrow this down by focusing on the items most likely to have the biggest impact on your organization. Changing your “News” section to be called “Blog” may change how many people click on the link, but will it move the needle in terms of the metrics that matter? Probably not.

Start by targeting high-impact areas:

- Donation links / pages

- Subscription forms

- Event details / registration pages

- Other calls to action specific to your organization

Then, home in on the elements of that page or form that matter most or could be a potential barrier. Some ideas might be:

- Required fields on a form – Do you really need to ask that? Would your conversion rate improve if your form was made simpler?

- Calls to action – Try different colors or text to see what gets the highest click-through

- Images – Does using a different photo or graphic increase engagement?

- Design / Layout – Should you put the call to action above the fold or under the details?

- Page elements – Does adding quotes from other constituents generate more (or larger) donations?

All of these are possible. The key is to test things in a consistent, systematic way to improve performance over time.

Use a tracking sheet to organize and execute on the experiments you come up with.
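A tracking sheet can be as simple as a spreadsheet with one row per experiment. As a rough sketch, the snippet below builds one as CSV text that you could paste into any spreadsheet tool. The column names and example rows are only suggestions made up for this illustration.

```python
import csv
import io

# Suggested columns; adjust them to fit your organization.
FIELDS = ["page", "hypothesis", "metric", "status", "result"]

experiments = [
    {
        "page": "Home",
        "hypothesis": "A red Donate button will increase clicks",
        "metric": "Donate click rate",
        "status": "running",
        "result": "",
    },
    {
        "page": "Newsletter form",
        "hypothesis": "Fewer required fields will increase sign-ups",
        "metric": "Form completion rate",
        "status": "planned",
        "result": "",
    },
]


def to_csv(rows):
    """Render the experiment list as CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS)
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


print(to_csv(experiments))
```

Writing down the hypothesis and the metric before each test starts keeps the process systematic and makes it easier to look back at what you have already tried.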

Culture of experimentation

One of the important lessons to come from Silicon Valley and the startup world over the past 20 years is the need to establish a culture of continuous innovation—a culture of experimentation.

And as a nonprofit, things should be no different.

Even if you don’t have the resources to staff a huge team of expert developers, your team can be innovative and experimental in small ways like A/B testing various components on your website.

Some key components to establishing this kind of culture in your organization:

1. Never assume what’s best

How many times has your organization had an internal debate about whether to put this button there or which photo to use on what page?

One long-standing tradition in business is that you make decisions and move on. But with a culture of experimentation, that’s not how it works. You make a choice quickly, and then commit to testing alternative options to see which one works best. You may have an opinion about which will be most effective, but you could easily be surprised to learn that you guessed wrong.

2. Build, test, change

In a culture of experimentation, things are never “done”. Your website isn’t done the day it launches. That’s just the beginning.

You should embrace a culture of continuous testing and improvement because even small boosts can compound into huge gains for your organization over time.
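To put a number on how small boosts compound, here is a quick back-of-the-envelope sketch. It assumes, purely for illustration, that every winning test improves the same metric by the same relative amount and that each gain sticks. Real results will be messier.

```python
def compounded_lift(lift_per_test: float, winning_tests: int) -> float:
    """Total relative improvement after a series of equal, stacked gains."""
    return (1 + lift_per_test) ** winning_tests - 1


# Hypothetical scenario: ten winning tests, each worth a 5% improvement.
total = compounded_lift(0.05, 10)
print(f"{total:.1%}")
# prints "62.9%"
```

Ten modest 5% wins add up to roughly a 63% improvement rather than 50%, because each gain builds on the ones before it.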

3. Take time to reflect

Most tech companies now embrace an agile methodology that breaks work into one- or two-week “sprints”, and they take time at the end of every sprint to assess what’s been accomplished.

This is hugely important for testing as well. After outlining specific tests and then executing them, you should always have time to reflect upon both the process and the findings to see what you’ve learned and what you can change moving forward.

4. Be collaborative

Improving the performance of your website isn’t just the responsibility of one person or one department. It’s the mission of the entire organization.

Whoever takes charge of running tests should gather ideas and input from as many people, departments, and business units as possible. Not only can having more input generate more ideas, but having a different perspective can lead to breakthrough ideas or discoveries.